The AI Shipping Paradox: Why Your 10x Developer Is Now a 1x Reviewer

A quick disclosure: I think tech is getting something wrong about AI and engineering productivity. I have seen this firsthand and wanted to share my experience in the hope that it helps others. I used AI to help me gather data on my own company and draft part of this piece.

Two projects with opposite outcomes

Kapwork ran two projects in a similar timeframe. One missed its target by 7.5x. The other shipped on time. Two things were different on the one that slipped: it had no single owner, and Claude Code launched in the middle of it. Why did this happen?

AI coding tools amplify whatever dynamics already exist on your team.

The tools have created an illusion of capacity, but throughput is constrained by the system itself, not by the use of AI tools. Give AI tools to a small team optimized for AI development and they ship faster. Give the same tools to a team where many developers touch the same codebase and you get more code, more conflicts, and more cleanup. Developers who used to ship 10x now spend their day reviewing the AI-generated output of five other people.

Our ability to coordinate that many contributors was always the constraint. It simply stayed invisible until AI use magnified it by an order of magnitude. I mean it. An order of magnitude.

AI multiplies everyone’s output, but it also multiplies the cost of coordinating that output. In fact, that cost grows faster than linearly with the number of contributors. Resolving conflicts. Debugging integration failures. Reviewing pull requests. Double the volume of code entering a shared codebase and you more than double the cleanup cost.

Your top developers' 10x productivity boost gets eaten by a 20x increase in remediation work.
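One way to see why coordination can grow faster than linearly is to count how many pairs of contributors might need to talk to each other. The sketch below is a simplifying assumption (every pair is a potential coordination channel), not something measured from our data, and it does not derive the 10x or 20x figures above. The function name `pairwise_channels` is made up for illustration.

```python
def pairwise_channels(contributors: int) -> int:
    """Count distinct pairs of contributors who may need to coordinate.

    Assumption: every pair of people touching the same codebase is a
    potential coordination channel, which gives n * (n - 1) / 2 pairs.
    """
    if contributors < 0:
        raise ValueError("contributors must be non-negative")
    return contributors * (contributors - 1) // 2


# Compare a few team sizes; 12 matches the number of developers on Heft.
for n in (1, 3, 6, 12):
    print(f"{n} contributors -> {pairwise_channels(n)} possible pairs")
```

Under this assumption, going from 6 to 12 contributors takes the number of possible pairs from 15 to 66, which is more than four times as many channels for twice as many people.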

Projects by the numbers

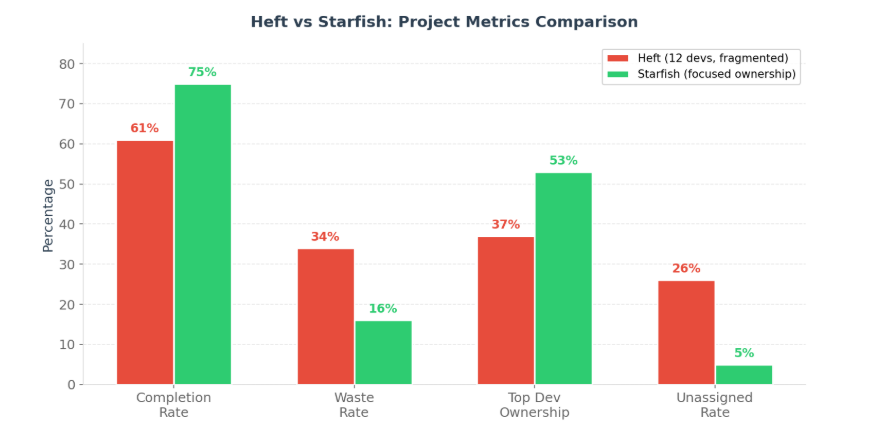

We pulled data from our Linear instance and compared the two projects side by side.

Project Heft was a cross-cutting product launch. Twelve developers touched the codebase. Project Starfish was a coverage and verification module. One developer completed 48 of 91 issues, with a small supporting cast.

Heft had a 26% unassigned issue rate. Starfish had 5%. Work was being created on Heft faster than anyone could triage and own it. AI-accelerated output was outpacing our organizational capacity to absorb it. Heft’s waste rate (tickets cancelled or marked duplicate): 34%. Starfish: 16%.

The same developers who produced clean, shippable work on Starfish produced conflicting, duplicated work on Heft.

Heft missed its target date by 7.5x while Starfish shipped on schedule. In all fairness, Heft had a lot more moving parts, and therefore more complexity, than Starfish. But with the AI hype around Claude Code and Opus 4.6, we felt emboldened to take on a much more ambitious scope.

Don't fall for: “Since we can move faster now, we can handle more complexity.” There are always unintended consequences.

What didn’t work

Three consistent patterns emerged, all related to coordinating work between developers:

Completed features that were broken. The email template integration is the clearest example. Four developers touched one feature. When our QA lead tested it, 8 of 10 SendGrid template IDs were still set to PLACEHOLDER in the codebase. The frontend referenced templates that the backend never pushed. Each developer’s individual work passed local validation. The feature broke where their independent pieces of work met.

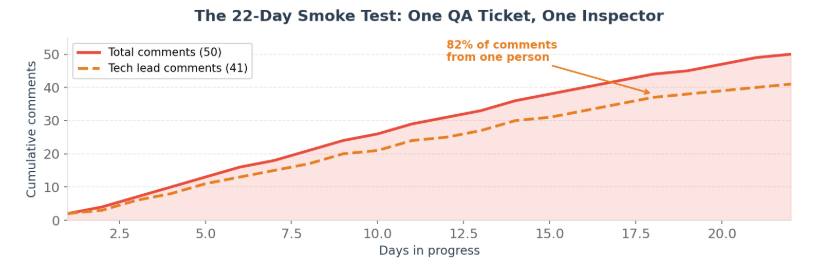

A 22-day smoke test. One QA ticket sat in progress for 22 straight days. It accumulated 50 comments from 4 contributors. Our product designer, acting as QA lead, posted 41 of them. He had become, in practice, a full-time quality inspector for the entire project. Every time AI helped a developer ship code faster, it generated more work for him to review.

Corrupted test infrastructure. The testing environment itself started failing. Test agents built against older releases broke when run against newer code. Fresh test data was needed for every cycle, but the person doing QA was overwhelmed and the tools he relied on were degrading under the pace of change.

These same people did fine on Starfish. The variable was the organization, not the engineers.

What to do about it

AI has given every developer a faster machine without improving the production process itself. And the instinct of most organizations, to add more QA, more review, more oversight, is EXACTLY what you need to avoid.

If you need a separate QA team, ask why. The answer usually points to a deeper problem with how ownership is distributed. In the age of AI, the coordination required under a fragmented ownership model, where many developers work on the same feature, does not work. Developers need to own a feature end to end and own their own quality. When one developer owns a module end to end, they have the context to test it thoroughly. They built it. They know the integration points. They know what will break. Separate QA becomes unnecessary.

So for us, the most critical thing is being able to adapt our organizational topology as fast as these new tools are being launched, so that we can maximize throughput and take full advantage of them. In other words, the issue is not only learning to use the tools but, more importantly, anticipating where the constraints will emerge as developer throughput increases.

Some things to watch out for:

- Ownership concentration. How many developers touch the same module? If more than two or three people regularly commit to the same area of the codebase, AI tools will amplify the coordination cost.

- Waste rate. What percentage of tickets get cancelled or marked duplicate? Anything above 15-20% suggests that AI-accelerated output is creating work faster than it can be triaged.

- Review bottleneck. Is your best developer building or reviewing? If they spend more than a third of their time on code review, they have been converted from a builder into an inspector.

- Handoff points. Where are the seams between developers? Look at features that require multiple people to complete. Those seams are where AI-generated code breaks.

- Deploy frequency versus defect rate. Are you shipping faster but breaking more? The ratio tells you whether AI is adding net capacity or just adding noise.

- QA structure. Does a separate QA team exist because individual developers cannot test their own work? If so, the ownership model prevents self-testing, and AI will make the QA bottleneck worse.
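Several of these checks can be computed from a ticket export and a commit log. The sketch below is a minimal illustration, not the script we ran against Linear: the `Ticket` fields, the status names in `WASTE_STATUSES`, and the `(module, author)` commit pairs are all assumptions, so map them to whatever your tracker and version control actually export.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional, Tuple


@dataclass
class Ticket:
    status: str              # hypothetical values: "done", "cancelled", "duplicate", ...
    assignee: Optional[str]  # None when nobody owns the ticket


# Assumed status names for "cancelled or marked duplicate".
WASTE_STATUSES = {"cancelled", "duplicate"}


def waste_rate(tickets: List[Ticket]) -> float:
    """Share of tickets that were cancelled or marked duplicate."""
    if not tickets:
        return 0.0
    wasted = sum(1 for t in tickets if t.status in WASTE_STATUSES)
    return wasted / len(tickets)


def unassigned_rate(tickets: List[Ticket]) -> float:
    """Share of tickets with no owner."""
    if not tickets:
        return 0.0
    unowned = sum(1 for t in tickets if t.assignee is None)
    return unowned / len(tickets)


def authors_per_module(commits: Iterable[Tuple[str, str]]) -> Dict[str, int]:
    """Count distinct commit authors for each module (ownership concentration)."""
    authors = defaultdict(set)
    for module, author in commits:
        authors[module].add(author)
    return {module: len(names) for module, names in authors.items()}


# Made-up sample data, for illustration only.
sample_tickets = [
    Ticket("done", "ana"),
    Ticket("cancelled", None),
    Ticket("duplicate", "ben"),
    Ticket("in_progress", None),
]
sample_commits = [("email", "ana"), ("email", "ben"), ("email", "ana"), ("billing", "cy")]

print(f"waste rate: {waste_rate(sample_tickets):.0%}")
print(f"unassigned rate: {unassigned_rate(sample_tickets):.0%}")
print(authors_per_module(sample_commits))
```

Tracking these numbers per project rather than per company matters: the same developers can look healthy on one project and overloaded on another.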

The company with fewer developers and clear module ownership will outship the company with 20 developers all committing to the same monolith. I learned this the hard way. The highest-leverage investment in the AI era is better organizational design.

On Pennarun’s argument

Avery Pennarun published a great piece called "Every Layer of Review Makes You 10x Slower." His core insight was that when AI drops code generation time from 30 minutes to 3 minutes, the review layers (code review, QA, staging, production validation) still take exactly as long, and each layer acts as a multiplier on delay. That makes total sense, but it places the problem at the review layer.

In our case, I would argue that slow review is a symptom, not the root cause. Until the root cause is addressed, AI will keep amplifying whatever dynamics already exist.

With AI tools, code is generated faster than ever. By the time our code hit a reviewer, it had already been touched by people who didn't know what the others had built. The review layer didn't create that mess; it revealed a system flaw that goes beyond review layers. Start by reviewing how work is assigned, not by having fewer review layers.